In this case, we’re training an AI agent to learn a policy for choosing factory floor parameters with the goal of optimizing product cost.

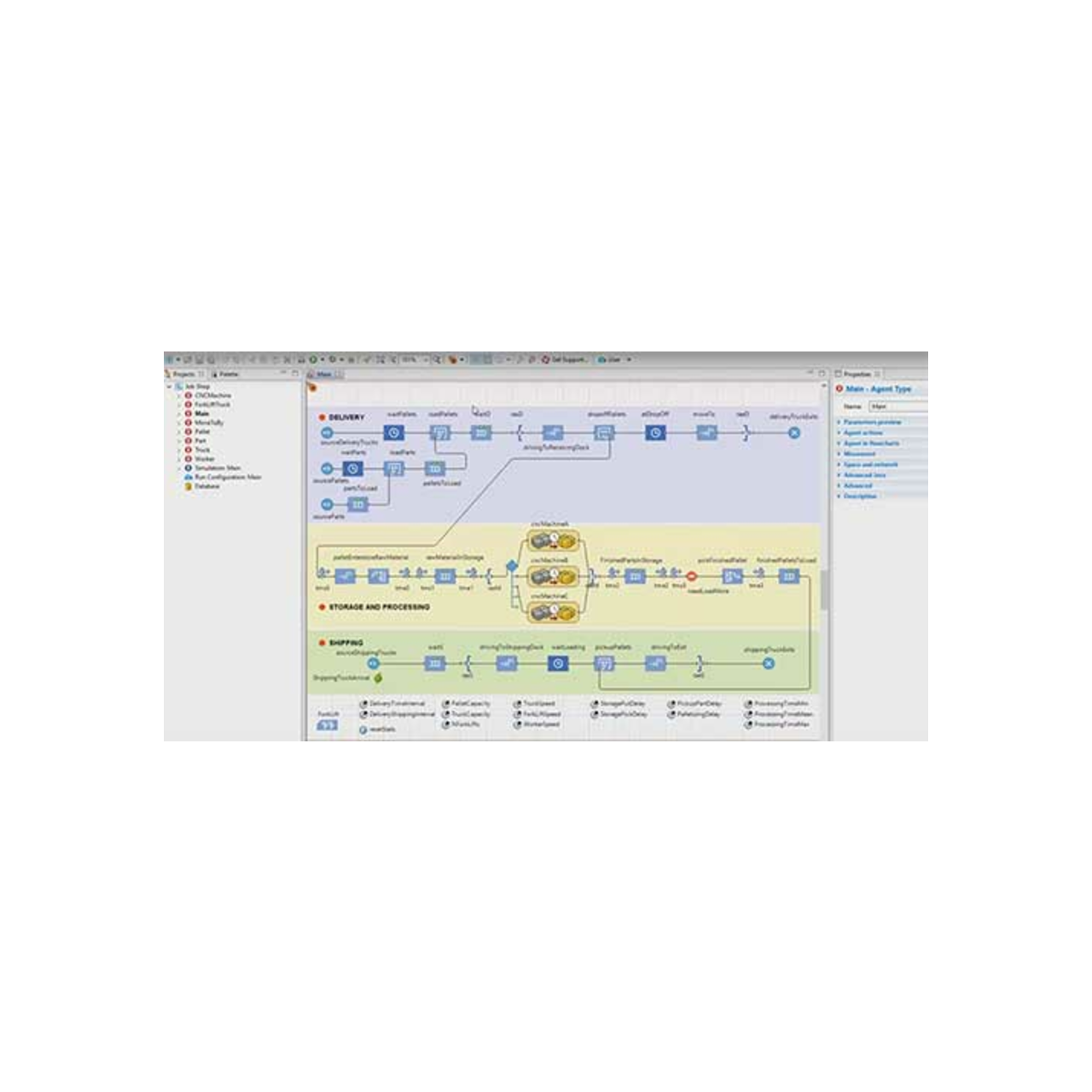

Cost accumulated by a product is broken down into several categories for analysis and optimization. Each incoming product seizes some resources, is processed by a machine, conveyed, and releases the resources. Let’s look at an example: Activity Based Costing Analysis (ABCA)Ī simplistic factory floor model where cost associated with product processing is calculated and analyzed using activity-based costing (ABC). Ideally this system is somewhat random and dynamic, which makes a reward-based learning approach superior compared with other traditional control theories. If you’re not familiar with RL, it’s based on the idea of framing problems as a Markov decision process in which an AI agent learns a control policy to always pick the best possible action for a given state of the system. Anylogic price how to#Over the past two years, we have continued to invest in our partnership with AnyLogic, and have learned more about how to use multi-method models with a deep reinforcement learning service such as ours. After being acquired by Microsoft in 2018, Bonsai recently launched as a fully integrated Azure service at Microsoft’s Build developers conference. It worked! It easily beat our co-workers when playing the game in real time and enabled our sales team to sign our first supply chain customer.įast forward to May 2020. I found a simulation model of the Beer Distribution Game on AnyLogic’s cloud platform and connected it to our deep reinforcement learning service to see if we could teach an AI agent to learn a successful control policy for one of the standard models taught in business school when supply chains are covered. This goes back to the question: What is a controller? Is a supply chain or any other business process a controller? If so, could we make these controllers more intelligent? 
With a vision of developing an AI toolchain that enables engineers to add intelligence to their existing and future systems, we started to take a broader view of what might be possible. At Bonsai, we accelerated that trend and focused on teaching AI agents how to become more intelligent controllers for advanced control problems where traditional approaches may have shortcomings.

Soon thereafter, examples of simple physics-based models were added, either as simple robotic systems or games that took advantage of built-in physics engines. Then, OpenAI enabled users with a library of example environments that could be used to learn more about RL and increase performance of the latest RL algorithms. Bonsai chose reinforcement learning (RL) as the first AI category to support, as we believed it could enable new use cases for automation and create significant value for future customers.īefore then, reinforcement learning had mostly received attention for teaching AI agents how to play games like Pong and other Atari classics. The idea was to enable subject matter experts to use their knowledge of a particular problem to teach the AI agent how to make decisions about it, or to create an optimal control policy. Four years ago, I joined the startup Bonsai, which envisioned a new way of training AI agents.
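To make the activity-based costing idea from the factory floor example more concrete, here is a minimal Python sketch of how a product might accumulate cost per category as it moves through the seize, process, convey, and release steps. This is not the AnyLogic model or the Bonsai API. The `Product` class, the `process_product` and `reward` functions, and all cost rates are hypothetical names and values chosen only for illustration, and the negative-cost reward is just one plausible way an RL reward could be defined.

```python
from dataclasses import dataclass, field

# Hypothetical cost rates (currency units per minute); illustrative only,
# not taken from the AnyLogic ABCA model.
RESOURCE_RATE = 0.50
MACHINE_RATE = 2.00
CONVEYOR_RATE = 0.20


@dataclass
class Product:
    """Tracks the cost accumulated by one product, split by category."""
    costs: dict = field(default_factory=dict)

    def charge(self, category: str, amount: float) -> None:
        # Add the amount to the running total for this cost category.
        self.costs[category] = self.costs.get(category, 0.0) + amount

    @property
    def total(self) -> float:
        return sum(self.costs.values())


def process_product(machine_minutes: float, conveyor_minutes: float) -> Product:
    """Accumulate cost for one product moving through the floor."""
    product = Product()
    # Assumption: resources are held from seize to release, which here
    # spans both the machine processing and the conveying time.
    held_minutes = machine_minutes + conveyor_minutes
    product.charge("resource", RESOURCE_RATE * held_minutes)
    product.charge("machine", MACHINE_RATE * machine_minutes)
    product.charge("conveyor", CONVEYOR_RATE * conveyor_minutes)
    return product


def reward(product: Product) -> float:
    """One possible reward signal: lower total cost gives a higher reward."""
    return -product.total


if __name__ == "__main__":
    p = process_product(machine_minutes=4.0, conveyor_minutes=10.0)
    print(p.costs)     # per-category breakdown
    print(reward(p))   # negative of the total cost
```

In a real setup, the two time arguments would come out of the simulation for whatever floor parameters the agent picked, and the per-category breakdown is what lets you see which activity drives the cost.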
